A New Three Object Triangulation Algorithm for Mobile Robot Positioning

Vincent Pierlot and Marc Van Droogenbroeck

INTELSIG, Laboratory for Signal and Image Exploitation

Montefiore Institute, University of Liège, Belgium

Scientific paper, referenced

[47], available in PDF:

here or

here

Keywords: mobile robot positioning, triangulation, algorithms, benchmarking, software,

C source code,

documentation, ToTal algorithm

Executive summary: the ToTal triangution algorithm.

Given three angles α1, α2, α3, find the location of the observer {xR, yR}

Given the three beacon coordinates

{x1, y1},

{x2, y2},

{x3, y3}, and the three angles

α1, α2, α3:

-

compute the modified beacon coordinates:

x’1 = x1 − x2, y’1 = y1 − y2, x’3 = x3 − x2, y’3 = y3 − y2,

-

compute the three cot(.):

T12 = cot(α2 − α1), T23 = cot(α3 − α2), T31 = (1 − T12T23)/(T12 + T23)

-

compute the modified circle center coordinates:

x’12 = x’1 + T12 y’1, y’12 = y’1 − T12 x’1,

x’23 = x’3 − T23 y’3, y’23 = y’3 + T23 x’3,

x’31 = (x’3 + x’1) + T31 (y’3 − y’1),

y’31 = (y’3 + y’1) − T31 (x’3 − x’1),

-

compute k’31:

k’31 = x’1x’3 + y’1y’3 + T31(x’1y’3 − x’3y’1),

-

compute D (if D = 0, return with an error):

D = (x’12 − x’23)(y’23 − y’31) − (y’12 − y’23)(x’23 − x’31),

-

compute the robot position {xR, yR} and return:

xR = x2 + (k’31(y’12 − y’23))/(D), yR = y2 + (k’31(x’23 − x’12))/(D).

Abstract

Positioning is a fundamental issue in mobile robot applications. It can be achieved in many ways. Among them, triangulation based on angles measured with the help of beacons is a proven technique. Most of the many triangulation algorithms proposed so far have major limitations. For example, some of them need a particular beacon ordering, have blind spots, or only work within the triangle defined by the three beacons. More reliable methods exist; however, they have an increasing complexity or they require to handle certain spatial arrangements separately.

In this paper, we present a simple and new three object triangulation algorithm, named ToTal, that natively works in the whole plane, and for any beacon ordering. We also provide a comprehensive comparison between many algorithms, and show that our algorithm is faster and simpler than comparable algorithms. In addition to its inherent efficiency, our algorithm provides a very useful and unique reliability measure that is assessable anywhere in the plane, which can be used to identify pathological cases, or as a validation gate in Kalman filters.

1 Introduction

Positioning is a fundamental issue in mobile robot applications. Indeed, in most cases, a mobile robot that moves in its environment has to position itself before it can execute its actions properly. Therefore the robot has to be equipped with some hardware and software capable to provide a sensory feedback related to its environment

[36]. Positioning methods can be classified into two main groups

[23]: (1)

relative positioning (also called

dead-

reckoning) and (2)

absolute positioning (or reference-based). One of the most famous relative positioning technique is the odometry, which consists of counting the number of wheel revolutions to compute the offset relative to a known position. It is very accurate for small offsets but is not sufficient because of the unbounded accumulation of errors over time (because of wheel slippage, imprecision in the wheel circumference, or wheel base)

[23]. Furthermore odometry needs an initial position and fails when the robot is “waken-up” (after a forced reset for example) or is raised and dropped somewhere, since the reference position is unknown or modified. An absolute positioning system is thus required to recalibrate the robot position periodically.

Relative and absolute positioning are complementary to each other

[36, 25] and are typically merged together by using a Kalman filter

[30, 18]. In many cases, absolute positioning is ensured by beacon-based triangulation or trilateration.

Triangulation is the process to determine the robot pose (position and orientation) based on angle measurements, while

trilateration methods involve the determination of the robot position based on distance measurements. Because of the availability of angle measurement systems, triangulation has emerged as a widely used, robust, accurate, and flexible technique

[27]. Another advantage of triangulation versus trilateration is that the robot can compute its orientation (or heading) in addition to its position, so that the complete

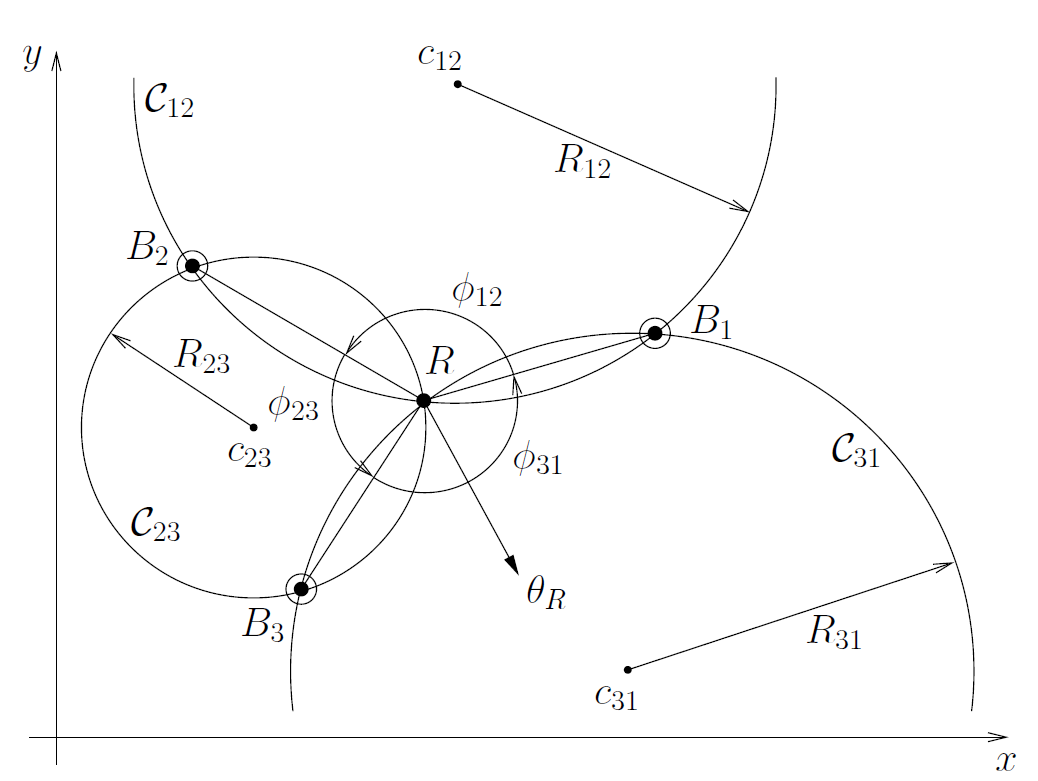

pose of the robot can be found. The process of determining the robot pose based on angle measurements is generally termed triangulation. The word triangulation is a wide concept, which does not specify if the angles are measured from the robot or the beacons, nor the number of angles used. In this paper, we are interested in self position determination, meaning that the angles are measured from the robot location. Figure

1↓ illustrates our triangulation setup.

Moreover, if only three beacons are used in self position determination, triangulation is also termed

Three Object Triangulation by Cohen and Koss

[5]. Here the general term

object refers to a 2-D point, whose location is known.

Our algorithm, named

ToTal hereafter, has already been presented in a previous paper

[46]. In this paper, we supplement our previous work with an extensive review about the triangulation topics, detail the implementation of our algorithm, and compare our algorithm with seventeen other similar algorithms. Please note that the C source code implementation, developed for the error analysis and benchmarks, is made available to the scientific community.

The paper is organized as follows. Section

2↓ reviews some of the numerous triangulation algorithms found in the literature. Our new three object triangulation algorithm is described in Section

3↓. Section

4↓ presents simulation results and benchmarks. Then, we conclude the paper in Section

5↓.

2 Related Work

2.1 Triangulation algorithms

The principle of triangulation has existed for a long time, and many methods have been proposed so far. One of the first comprehensive reviewing work has been carried out by Cohen and Koss

[5]. In their paper, they classify the triangulation algorithms into four groups: (1)

Geometric Triangulation, (2)

Geometric Circle Intersection, (3)

Iterative methods (Iterative Search, Newton-Raphson, etc.), and (4)

Multiple Beacons Triangulation.

The first group could be named Trigonometric Triangulation, because it makes an intensive use of trigonometric functions. Algorithms of the second group determine the parameters (radius and center) of two (of the three) circles passing through the beacons and the robot, then they compute the intersection between these two circles. Methods of the first and second groups are typically used to solve the three object triangulation problem. The third group linearizes the trigonometric relations to converge to the robot position after some iterations, from a starting point (usually the last known robot position). In the iterative methods, they also present Iterative Search, which consists in searching the robot position through the possible space of orientations, and by using a closeness measure of a solution. The fourth group addresses the more general problem of finding the robot pose from more than three angle measurements (usually corrupted by errors), which is an overdetermined problem.

Several authors have noticed that the second group (

Geometric Circle Intersection) is the most popular for solving the three object triangulation problem

[29, 9]. The oldest Geometric Circle Intersection algorithm was described by McGillem and Rappaport

[10, 9]. Font-Llagunes and Battle

[29] present a very similar method, but they first change the reference frame so to relocate beacon 2 at the origin and beacon 3 on the X axis. They compute the robot position in this reference frame and then, they apply a rotation and translation to return to the original reference frame. Zalama

et al. [16, 15] present a hardware system to measure angles to beacons and a method to compute the robot pose from three angle measurements. A similar hardware system and method based on

[16] and

[15] is described by Tsukiyama

[45]. Kortenkamp

[12] presents a method which turns out to be exactly the same as the one described by Cohen and Koss

[5]. All these methods compute the intersection of two of the three circles passing through the beacons and the robot. It appears that they are all variations or improvements of older methods of McGillem and Rappaport, or Cohen and Koss. The last one is described by Lukic

et al. [39, 37], but it is not general, as it only works for a subset of all possible beacon locations.

Some newer variations of Geometric/Trigonometric triangulation algorithms are also described in the literature. In

[27], Esteves

et al. extend the algorithm presented by Cohen and Koss

[5] to work for any beacon ordering and to work outside the triangle formed by the three beacons. In

[26, 34], they describe the improved version of their algorithm to handle the remaining special cases (when the robot lies on the line joining two beacons). They also analyze the position and orientation error sensitivity in

[34]. Whereas Easton and Cameron

[2] concentrate on an error model for the three object triangulation problem, they also briefly present an algorithm belonging to this family. Their simple method works in the whole plane and for any beacon ordering. The work of Hmam

[17] is based on Esteves

et al., as well as Cohen and Koss. He presents a method, valid for any beacon ordering, that divides the whole plane into seven regions and handles two specific configurations of the robot relatively to the beacons. In

[8], Madsen and Andersen describe a vision-based positioning system. Such an algorithm belongs to the trigonometric triangulation family as the vision system is used to measure angles between beacons.

It should be noted that the “three object triangulation problem” is also known as the “three point resection problem” in the surveying engineering research area. In this field, the beacons are often called stations, and the angles (or azimuths) are measured with a goniometer. As it is a basic operation for decades in the surveying field, there are lots of procedures (more than

500) to solve this problem, numerically as well as graphically

[31]. Surprisingly, there is almost no link between these two fields, except the recent work of Font-Llagunes and Batlle

[31], and the older one of McGillem and Rappaport

[10, 9]. Therefore, it appears that some algorithms from the two fields are similar, but wear different names. One of the most famous and oldest procedures is called the Kaestner-Burkhardt method, which is also known as the Pothonot-Snellius method

[42]. This method is similar to the one described by McGillem and Rappaport

[10, 9], which is a trigonometric approach. Then, there is the Collins method

[42], which is a trigonometric solution, close to the one described by Esteves

et al. [34], or Cohen and Koss

[5]. In addition, there is the Cassini method

[42], similar to the method of Easton and Cameron

[2], both being a trigonometric approach. Finally, there is the Tienstra method

[42, 32], which is a completely different approach, since it makes use of the barycentric coordinates in order to express the robot position as a weighted sum of the beacons’ coordinates. This method has been known for a long time; Porta and Thomas have presented a new concise proof for this method recently

[32]. Despite that the three point resection problem is known for a long time and has many solutions, some newer works are still emerging. For example, Font-Llagunes and Batlle

[31] have published a new method, which uses straight lines intersection and the property of similar triangles. To our knowledge, the most recent work has been carried on by Ligas

[38]. Both Ligas’s method and

ToTal rely on the idea of using the radical axis of two circles. However, Ligas intersects one radical axis and one circle, whereas our algorithm intersects the three radical axes of the three pairs of circles. Likewise, Ligas also uses only two trigonometric functions (like our method

ToTal), and as a consequence, it is one of the most efficient methods (with

ToTal), as shown in Section

4.2↓.

Some of the Multiple Beacons Triangulation (multiangulation) algorithms are described hereafter. One of the first work in this field was presented by Avis and Imai

[11]. In their method, the robot measures

k angles from a subset of

n indistinguishable landmarks, and therefore they produce a bounded set a valid placements of the robot. Their algorithm runs in

O(kn2) if the robot has a compass or in

O(kn3) otherwise. The most famous algorithm was introduced by Betke and Gurvits

[36]. They use an efficient representation of landmark 2D locations by complex numbers to compute the robot pose. The landmarks are supposed to have an identifier known by the algorithm. The authors show that the complexity of their algorithm is proportional to the number of beacons. They also performed experiments with noisy angle measurements to validate their algorithm. Finally, they explain how the algorithm deals with outliers. Another interesting approach is proposed by Shimshoni

[22]. He presents an efficient SVD based multiangulation algorithm from noisy angle measurements, and explains why transformations have to be applied to the linear system in order to improve the accuracy of the solution. The solution is very close to the optimal solution computed with nonlinear optimization techniques, while being more than a hundred times faster. Briechle and Hanebeck

[35] present a new localization approach in the case of relative bearing measurements by reformulating the given localization problem as a nonlinear filtering problem.

Siadat and Vialle

[4] describe a multiangulation method based on the Newton-Raphson iterative method to converge to a solution minimizing an evaluation criterion. Lee

et al. [6] present another iterative method very similar to Newton-Raphson. Their algorithm was first designed to work with three beacons, but it can also be generalized to a higher number of beacons. The initial point of the convergence process is set to the center of the beacons, and good results are obtained after only four steps.

Sobreira

et al. [20] present a hybrid triangulation method working with two beacons only. They use a concept similar to the

running-fix method introduced by Bais in

[1], in which the robot has to move by a known distance to create a virtual beacon measurement and to compute the robot pose after it has stopped to take another angle measurement. In

[19], Sobreira

et al. perform an error analysis of their positioning system. In particular, they express the position uncertainty originated by errors on measured angles in terms of a surface. Sanchiz

et al. [33] describe another multiangulation method based on

Iterative Search and circular correlation. They first compute the robot orientation by maximizing the circular correlation between the expected beacons angles and the measured beacons angles. Then, a method similar to

Iterative Search is applied to compute the position. Hu and Gu

[18] present a multiangulation method based on Kohonen neural network to compute the robot pose and to initialize an extended Kalman filter used for navigation.

2.2 Brief discussion

It is difficult to compare all the above mentioned algorithms, because they operate in different conditions and have distinct behaviors. In practice, the choice is dictated by the application requirements and some compromises. For example, if the setup contains three beacons only or if the robot has limited on-board processing capabilities, methods of the first and second groups are the best candidates. Methods of the third and fourth groups are appropriate if the application must handle multiple beacons and if it can accommodate a higher computational cost. The main drawback of the third group is the convergence issue (existence or uniqueness of the solution)

[5]. The main drawback of the fourth group is the computational cost

[36, 35, 22].

The drawbacks of the first and second group are usually a lack of precision related to the following elements: (1) the beacon ordering needed to get the correct solution, (2) the consistency of the methods when the robot is located outside the triangle defined by the three beacons, (3) the strategy to follow when falling into some particular geometrical cases (that induce mathematical underdeterminations when computing trigonometric functions with arguments like

0 or

π, division by

0, etc), and (4) the reliability measure of the computed position. Simple methods of the first and second groups usually fail to propose a proper answer to all of these concerns. For example, to work in the entire plane and for any beacon ordering (for instance

[34]), they have to consider a set of special geometrical cases separately, that results in a lack of clarity. Finally, to our knowledge, none of these algorithms gives a realistic reliability measure of the computed position.

2.3 Other aspects of triangulation

For now, we have focused on the description of triangulation algorithms which are used to compute the position and orientation of the robot. Other aspects of triangulation have to be considered as well to achieve an optimal result on the robot pose in a practical situation. These are: (1) the sensitivity analysis of the triangulation algorithm, (2) the optimal placement of the landmarks, (3) the selection of some landmarks among the available ones to compute the robot pose, and (4) the knowledge of the true landmark locations in the world and the true location of the angular sensor on the robot.

Kelly

[3] uses the famous Geometric Dilution of Precision (GDOP) concept, used in GPS error analysis, and applies it to range based and angle based positioning techniques. He derives two useful formulas in the case of two beacons. Madsen

et al. [7] perform a sensitivity analysis of their triangulation algorithm. They use first order propagation of angle measurement errors through covariance matrix and Jacobian to derive the precision of location. Easton and Cameron

[2] present the same error analysis as that of Madsen

et al., but in addition to the angle uncertainty, they take into account the beacon location uncertainty. They also present some simulations for various beacons configurations as well as some metrics to evaluate their model’s performance.

Optimal landmark placement has been extensively studied. Sinriech and Shoval

[13, 43] define a nonlinear optimization model used to determine the position of the minimum number of beacons required by a shop floor to guarantee an accurate and reliable performance for automated guided vehicles (AGVs). Demaine

et al. [14] present a polynomial time algorithm to place reflector landmarks such that the robot can always localize itself from any position in the environment, which is represented by a polygonal map. Tedkas and Isler

[40, 41] address the problem of computing the minimum number and placement of sensors so that the localization uncertainty at every point in the workspace is less than a given threshold. They use the uncertainty model for angle based positioning derived by Kelly

[3].

Optimal landmark selection has been studied by Madsen

et al. [7, 8]. They propose an algorithm to select the best triplet among several landmarks seen by a camera, yielding to the best position estimate. The algorithm is based on a “goodness” measure derived from an error analysis which depends on landmarks and on the robot relative pose.

Having a good sensor that provides precise angle measurements as well as a good triangulation algorithm is not the only concern to get accurate positioning results. Indeed, the angle sensor could be subject to non linearities in the measuring angle range (a complete revolution). Moreover, the beacon locations that are generally measured manually are subject to inaccuracies, which directly affect the positioning algorithm. In their paper, Loevsky and Shimshoni

[21] propose a method to calibrate the sensor and a method to correct the measured beacon locations. They show that their procedure is effective and mandatory to achieve good positioning performance.

In the remainder of this paper, we concentrate on three object triangulation methods. Our paper presents a new three object triangulation algorithm that works in the entire plane (except when the beacons and the robot are concyclic or collinear), and for any beacon ordering. Moreover, it minimizes the number of trigonometric computations, and provides a unique quantitative reliability measure of the computed position.

3 Description of a New Three Object Triangulation Algorithm

Our motivation for a new triangulation algorithm is fourfold: (1) we want it to be independent of the beacon ordering, (2) the algorithm must also be independent of the relative positions of the robot and the beacons, (3) the algorithm must be fast and simple to implement in a dedicated processor, and (4) the algorithm has to provide a criterion to qualify the reliability of the computed position.

Our algorithm, named ToTal, belongs to the family of Geometric Circle Intersection algorithms (that is, the second group). It first computes the parameters of the three circles passing through the robot and the three pairs of beacons. Then, it computes the intersection of these three circles, by using all the three circles parameters (not only two of them, to the contrary of other methods).

Our algorithm relies on two assumptions: (1) the beacons are distinguishable (a measured angle can be associated to a given beacon), and (2) the angle measurements from the beacons are taken separately, and relatively to some reference angle

θ, usually the robot heading (see Figure

1↑). Note that the second hypothesis simply states that angles are given by a rotating angular sensor. Such sensors are common in mobile robot positioning using triangulation

[24, 23, 16, 6, 9, 48]. By convention, in the following, we consider that angles are measured counterclockwise (CCW), like angles on the trigonometric circle. Inverting the rotating direction to clockwise (CW) would only require minimal changes of our algorithm.

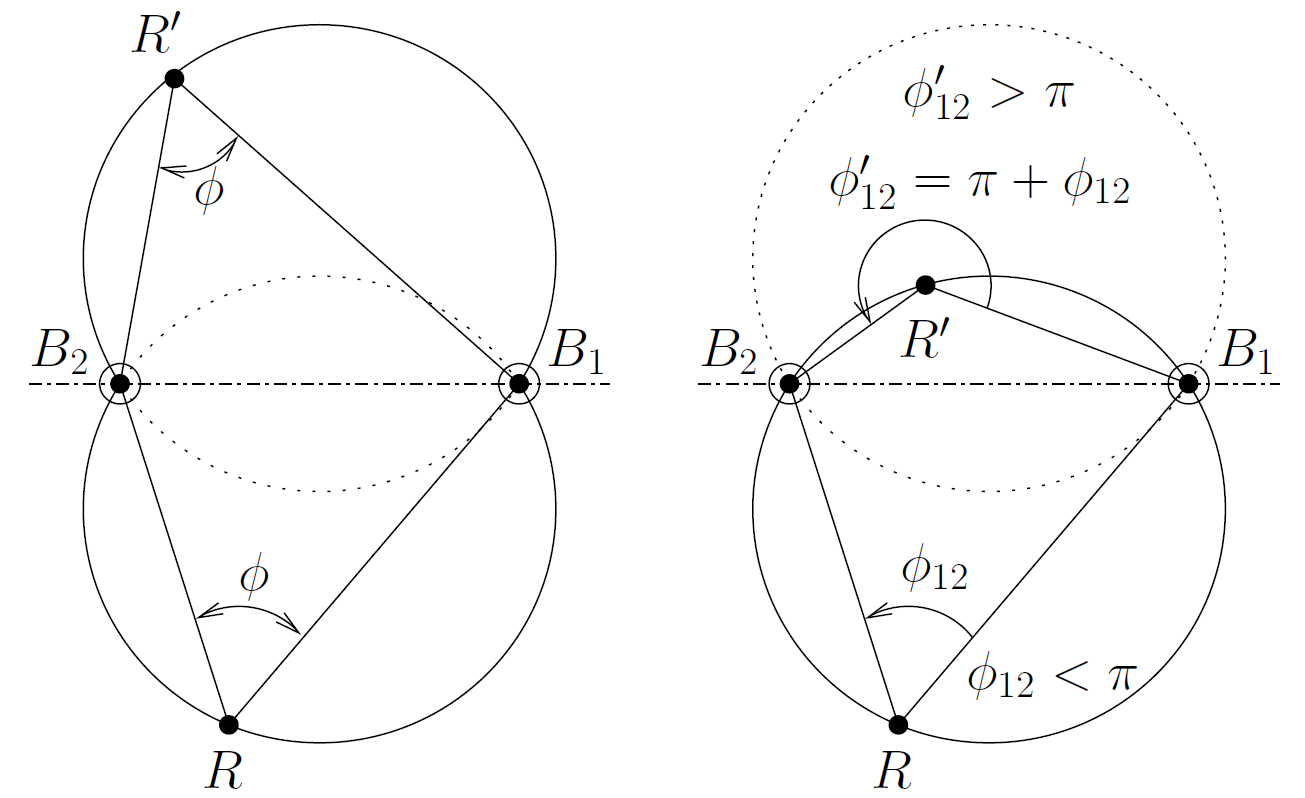

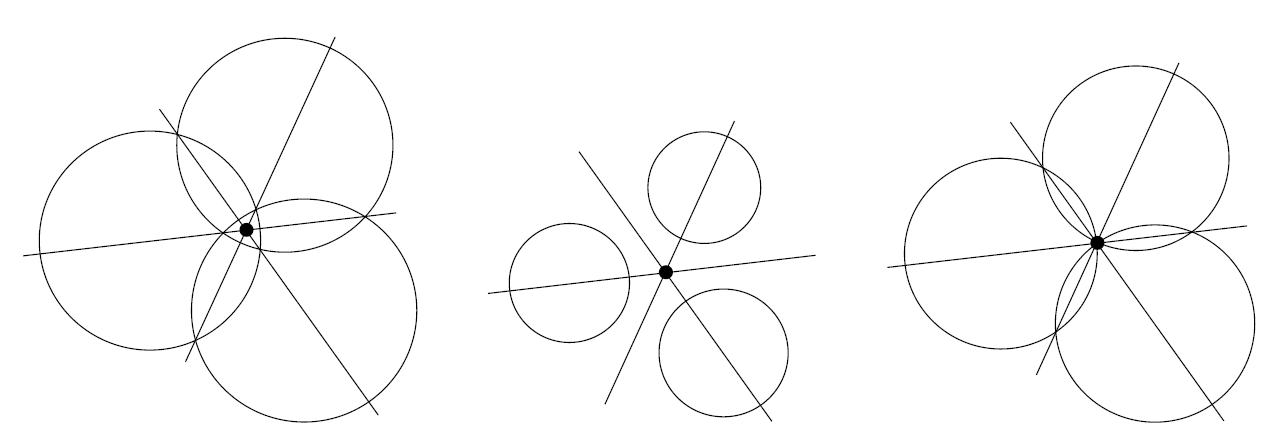

3.1 First part of the algorithm: the circle parameters

In a first step, we want to calculate the locus of the robot positions

R, that

see two fixed beacons,

B1 and

B2, with a constant angle

γ, in the 2D plane. It is a well-known result that this locus is an arc of the circle passing through

B1 and

B2, whose radius depends on the distance between

B1 and

B2, and

γ (Proposition 21 of Book III of

Euclid’s Elements

[44]). More precisely, this locus is composed of two arcs of circle, which are the reflection of each other through the line joining

B1 and

B2 (see the continuous lines of the left-hand side drawing of Figure

2↓).

A robot that measures an angle

γ between two beacons can stand on either of these two arcs. This case occurs if the beacons are not distinguishable or if the angular sensor is not capable to measure angles larger than

π (like a vision system with a limited field of view, as used by Madsen

et al. [8]). To avoid this ambiguity, we impose that, as shown in the right-hand side of Figure

2↑, the measured angle between two beacons

B1 and

B2, which is denoted

φ12, is always computed as

φ12 = φ2 − φ1 (this choice is natural for a CCW rotating sensor). This is consistent with our measurement considerations and it removes the ambiguity about the locus; however, it requires that beacons are indexed and that the robot is capable to establish the index of any beacon. As a result, the locus is a single circle passing through

R,

B1, and

B2. In addition, the line joining

B1 and

B2 divides the circle into two parts: one for

φ12 < π and the other for

φ12 > π. In the following, we compute the circle parameters.

The circle equation may be derived by using the complex representation of 2-D points (

Argand diagram), and by expressing angles as the argument of complex numbers. In particular, the angle of

(B2 − R) is equal to that of

(B1 − R) plus

φ12. Equivalently

Then, if we substitute

R,

B1,

B2, respectively by

(x + iy),

(x1 + iy1),

(x2 + iy2), we have that

where

i = √( − 1). After lengthy simplifications, we find the locus

which is a circle whose center

{x12, y12} is located at

and whose squared radius equals

The three last equations may also be found in

[29]. The replacement of

φ12 by

π + φ12 in the above equations yields the same circle parameters (see the right-hand side of Figure

2↑), which is consistent with our measurement considerations. For an angular sensor turning in the CW direction, these equations are identical except that one has to change the sign of

cot(.) in equations

5↑ and

5↑.

Hereafter, we use the following notations:

-

Bi is the beacon i, whose coordinates are {xi, yi},

-

R is the robot position, whose coordinates are {xR, yR},

-

φi is the angle for beacon Bi,

-

φij = φj − φi is the bearing angle between beacons Bi and Bj,

-

Tij = cot(φij),

-

Cij is the circle passing through Bi, Bj, and R,

-

cij is the center of Cij, whose coordinates are {xij, yij}:

-

Rij is the radius of Cij, derived from:

All the previous quantities are valid for i ≠ j; otherwise the circle does not exist. In addition, we have to consider the case φij = kπ, k ∈ ℤ. In that case, the sin(.) and cot(.) are equal to zero, and the circle degenerates as the BiBj line (infinite radius and center coordinates). In a practical situation, it means that the robot stands on the BiBj line, and measures an angle φij = π when being between the two beacons, or φij = 0 when being outside of the BiBj segment. These special cases are discussed later.

3.2 Second part of the algorithm: circles intersection

From the previous section, each bearing angle

φij between beacons

Bi and

Bj constraints the robot to be on a circle

Cij, that passes through

Bi,

Bj, and

R (Figure

3↓). The parameters of the circles are given by equations

8↑,

8↑, and

10↑. Common methods use two of the three circles to compute the intersections (when they exist), one of which is the robot position, the second being the common beacon of the two circles. This requires to solve a quadratic system and to choose the correct solution for the robot position

[29]. Moreover the choice of the two circles is arbitrary and usually fixed, whereas this choice should depend on the measured angles or beacons and robot relative configuration to have a better numerical behavior.

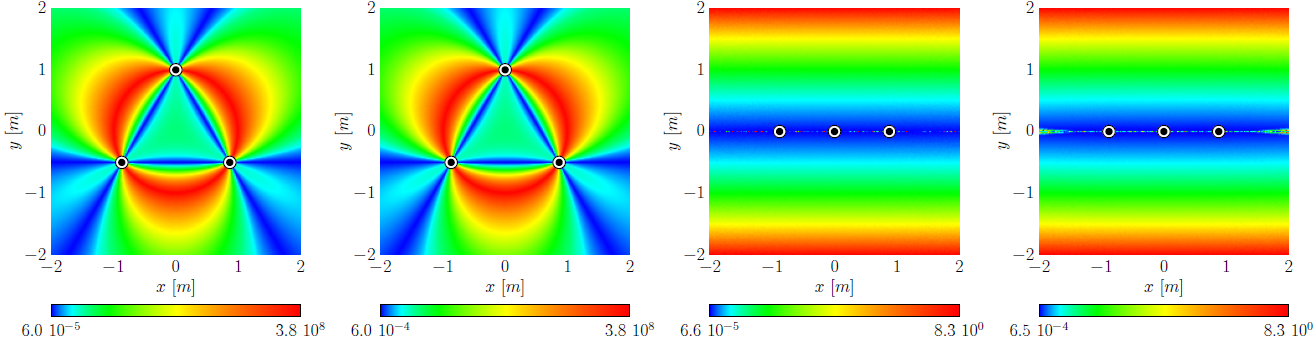

Hereafter, we propose a novel method to compute this intersection, by using all of the three circles, and by reducing the problem to a linear problem. To understand this elegant method, we first introduce the notion of

power center (or

radical center) of three circles. The

power center of three circles is the unique point of equal

power with respect to these circles

[28]. The

power of a point

p relative to a circle

C is defined as

where

{x, y} are the coordinates of

p,

{xc, yc} are the circle center coordinates, and

R is the circle radius. The power of a point is null onto the circle, negative inside the circle, and positive outside the circle. It defines a sort of relative distance of a point from a given circle. The

power line (or

radical axis) of two circles is the locus of points having the same power with respect to both circles

[28]; in other terms, it is also the locus of points at which tangents drawn to both circles have the same length. The power line is perpendicular to the line joining the circle centers and passes through the circle intersections, when they exist. Monge demonstrated that when considering three circles, the three power lines defined by the three pairs of circles are concurring in the power center

[28]. Figure

4↓ shows the power center of three circles for various configurations. The power center is always defined, except when at least two of the three circle centers are equal, or when the circle centers are collinear (parallel power lines).

The third case of Figure

4↑ (right-hand drawing) is particular as it perfectly matches our triangulation problem (Figure

3↑). Indeed, the power center of three concurring circles corresponds to their unique intersection. In our case, we are sure that the circles are concurring since we have

by construction (only two of the three bearing angles are independent), even in presence of noisy angle measurements

φ1,

φ2, and

φ3. It has the advantage that this intersection may be computed by intersecting the power lines, which is a linear problem. The power line of two circles is obtained by equating the power of the points relatively to each circle [given by equation

11↑]. In our problem, the power line of

C12 and

C23 is given by:

where we introduce a new quantity

kij which only depends on

Cij parameters:

In our triangulation problem, we have to intersect the three power lines, that is to solve this linear system:

As can be seen, any of these equations may be obtained by adding the two others, which is a way to prove that the three power lines concur in a unique point: the power center. The coordinates of the power center, that is the robot position is given by

The denominator

D△, which is common to

xR and

yR, is equal to:

which is the signed area between the circle centers, multiplied by two. This result shows that the power center exists, if the circle centers are not collinear, that is if

D△ ≠ 0. The special case (

D△ = 0) is discussed later.

3.3 First (naive) version of the algorithm

A first, but naive, version of our algorithm consists in applying the previous equations to get the robot position. This method is correct; however, it is possible to further simplify the equations. First, note that the squared radii

R2ij only appear in the parameters

kij. If we replace the expression of

R2ij [equation

10↑] in the expression of

kij [equation

16↑], we find, after many simplifications that

which is much simpler than equations

10↑ and

16↑ (no squared terms anymore). In addition, the

1⁄2 factor involved in the circle centers coordinates [equations

8↑ and

8↑] as well as in the parameters

kij [equation

16↑], cancels in the robot position coordinates [see equations

18↑ and

18↑]. This factor can thus be omitted. For now, we use these modified circle center coordinates

{x’ij, y’ij}

and modified parameters

k’ij

3.4 Final version of the algorithm

The most important simplification consists in translating the world coordinate frame into one of the beacons, that is solving the problem relatively to one beacon and then add the beacon coordinates to the computed robot position (like Font-Llagunes

[29] without the rotation of the frame). In the following, we arbitrarily choose

B2 as the origin (

B’2 = {0, 0}). The other beacon coordinates become:

B’1 = {x1 − x2, y1 − y2} = {x’1, y’1} and

B’3 = {x3 − x2, y3 − y2} = {x’3, y’3}. Since

x’2 = 0 and

y’2 = 0, we have

k’12 = 0,

k’23 = 0. In addition, we can compute the value of one

cot(.) by referring to the two other

cot(.) because the three angles are linked [equation

12↑]:

The final algorithm is given in Algorithm

2↓.

Given the three beacon coordinates

{x1, y1},

{x2, y2},

{x3, y3}, and the three angles

φ1,

φ2,

φ3:

-

compute the modified beacon coordinates:

x’1 = x1 − x2, y’1 = y1 − y2,

x’3 = x3 − x2, y’3 = y3 − y2,

-

compute the three cot(.):

T12 = cot(φ2 − φ1), T23 = cot(φ3 − φ2),

T31 = (1 − T12T23)/(T12 + T23),

-

compute the modified circle center coordinates:

x’12 = x’1 + T12 y’1, y’12 = y’1 − T12 x’1,

x’23 = x’3 − T23 y’3, y’23 = y’3 + T23 x’3,

x’31 = (x’3 + x’1) + T31 (y’3 − y’1),

y’31 = (y’3 + y’1) − T31 (x’3 − x’1),

-

compute k’31:

k’31 = x’1x’3 + y’1y’3 + T31(x’1y’3 − x’3y’1),

-

compute D (if D = 0, return with an error):

D = (x’12 − x’23)(y’23 − y’31) − (y’12 − y’23)(x’23 − x’31),

-

compute the robot position {xR, yR} and return:

xR = x2 + (k’31(y’12 − y’23))/(D), yR = y2 + (k’31(x’23 − x’12))/(D).

Algorithm 2 Final version of the ToTal algorithm.

3.5 Discussion

The ToTal algorithm is very simple: computations are limited to basic arithmetic operations and only two cot(.). Furthermore, the number of conditional statements is reduced, which increases its readability and eases its implementation. Among them, we have to take care of the cot(.) infinite values and the division by D, if equal to zero. If a bearing angle φij between two beacons is equal to 0 or π, that is, if the robot stands on the BiBj line, then cot(φij) is infinite. The corresponding circle degenerates to the BiBj line (infinite radius and center coordinates). The robot is then located at the intersection of the remaining power line and the BiBj line; it can be shown that the mathematical limit limTij → ±∞{xR, yR} exists and corresponds to this situation.

Like for other algorithms, our algorithm also has to deal with these special cases, but the way to handle them is simple. In practice, we have to avoid

Inf or

NaN values in the floating point computations. We propose two ways to manage this situation. The first way consists in limiting the

cot(.) value to a minimum or maximum value, corresponding to a small angle that is far below the measurement precision. For instance, we limit the value of the

cot(.) to

±108, which corresponds to an angle of about

±10 − 8 rad; this is indeed far below the existing angular sensor precisions. With this approximation of the mathematical limit, the algorithm remains unchanged. The second way consists in adapting the algorithm when one bearing angle is equal to

0 or

π. This special case is detailed in Algorithm

3↓, in which the indexes

{i, j, k} have to be replaced by

{1, 2, 3},

{3, 1, 2}, or

{2, 3, 1} if

φ31 = 0,

φ23 = 0, or

φ12 = 0 respectively.

Given the three beacon coordinates

{xi, yi},

{xj, yj},

{xk, yk}, and the three angles

φi,

φj,

φk:

-

compute the modified beacon coordinates:

x’i = xi − xj, y’i = yi − yj,

x’k = xk − xj, y’k = yk − yj,

-

compute Tij = cot(φj − φi),

-

compute the modified circle center coordinates:

x’ij = x’i + Tij y’i, y’ij = y’i − Tij x’i,

x’jk = x’k + Tij y’k, y’jk = y’k − Tij x’k,

x’ki = (y’k − y’i), y’ki = (x’i − x’k),

-

compute k’ki = (x’iy’k − x’ky’i),

-

compute D (if D = 0, return with an error):

D = (x’jk − x’ij)(y’ki) + (y’ij − y’jk)(x’ki),

-

compute the robot position {xR, yR} and return:

xR = xj + (k’ki(y’ij − y’jk))/(D), yR = yj + (k’ki(x’jk − x’ij))/(D).

Algorithm 3 Special case φki = 0 ∨ φki = π.

The denominator

D is equal to

0 when the circle centers are collinear or coincide. For non collinear beacons, this situation occurs when the beacons and the robot are concyclic; they all stand on the same circle, which is called the

critic circumference in

[29]. In that case, the three circles are equal as well as their centers, which causes

D = 0 (the area defined by the three circle centers is equal to zero). For collinear beacons, this situation is encountered when the beacons and the robot all stand on this line. For these cases, it is impossible to compute the robot position. This is a

restriction common to all three object triangulation, regardless of the used algorithm

[27, 29, 9].

The value of

D, computed in the final algorithm, is the signed area delimited by the real circle centers, multiplied by height.

|D| decreases to

0 when the robot approaches the critic circle (almost collinear circle center and almost parallel power lines). Therefore, it is quite natural to use

|D| as a reliability measure of the computed position. In the next section, we show that

1⁄|D| is a good approximation of the position error. In practice, this measure can be used as a validation gate after the triangulation algorithm, or when a Kalman filter is used. Finally, it should be noted that the robot orientation

θR may be determined by using any beacon

Bi and its corresponding angle

φi, once the robot position is known:

where

atan2(y, x) denotes the C-like two arguments function, defined as the principal value of the argument of the complex number

(x + iy).

4 Simulations

4.1 Error Analysis

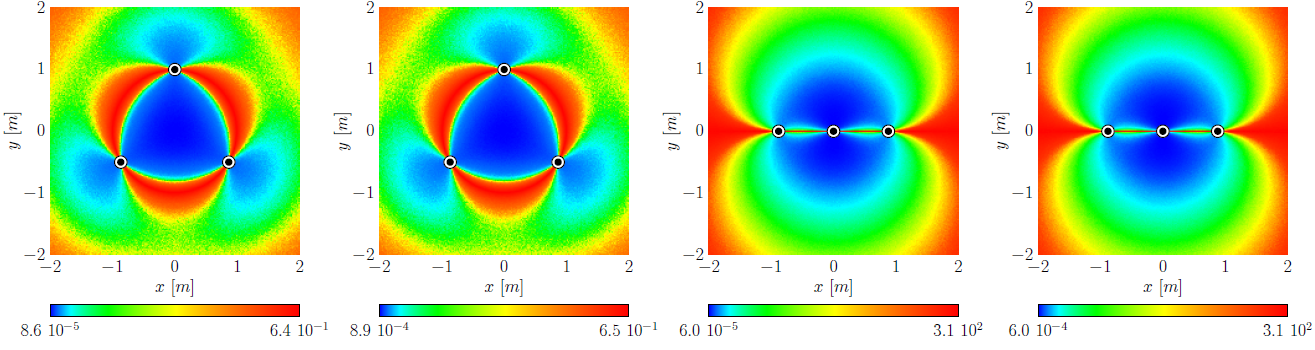

The problem of triangulation given three angle measurements is an exact calculus of the robot pose, even if these angles are affected by noise. Therefore, the sensitivity of triangulation with respect to the input angles is unique and does not depend on the way the problem is solved, nor on the algorithm. This contrasts with multiangulation, which is an overdetermined problem, even with perfect angle measurements. Therefore, we do not elaborate on the error analysis for triangulation, as it has been studied in many papers; the same conclusions, as found in

[2, 34, 29, 3, 8, 43], also yield for our algorithm. However, in order to validate our algorithm and to discuss the main characteristics of triangulation sensitivity, we have performed some simulations. The simulation setup comprises a square shaped area (

4 × 4 m2), with three beacons forming two distinct configurations. The first one is a regular triangle (

B1 = {0 m, 1 m},

B2 = { − 0.866 m, − 0.5 m}, and

B3 = {0.866 m, − 0.5 m}), and the second one is a line (

B1 = {0 m, 0 m},

B2 = { − 0.866 m, 0 m}, and

B3 = {0.866 m, 0 m}). The distance step is

2 cm in each direction. For each point in this grid, we compute the exact angles

φi seen by the robot (the robot orientation is arbitrarily set to

0 °). Then we add Gaussian noise to these angles, with zero mean, and with two different standard deviations (

σ = 0.01 °,

σ = 0.1 °). The noisy angles are then used as inputs of our algorithm to compute the estimated position. The position error

ΔdR is the Euclidean distance between the exact and estimated positions:

The orientation error

ΔθR is the difference between the exact and estimated orientations:

The experiment is repeated

1000 times for each position to compute the standard deviation of the position error

√(var{ΔdR}) and of the orientation error

√(var{ΔθR}). The standard deviations of the position and orientation errors are drawn in Figure

5↓. The beacon locations are represented by black and white dot patterns. The first and second columns provide the result for the first configuration, for

σ = 0.01 °, and

σ = 0.1 °, respectively. The third and fourth columns provide the result for the second configuration, for

σ = 0.01 °, and

σ = 0.1 °, respectively. The first, second, and third rows show the standard deviation of the position error, the standard deviation of the orientation error, and the mean error measure

1⁄|D|, respectively. Note that the graphic scales are not linear. We have equalized the image histograms in order to enhance their visual representation, and to point out the similarities between the position and orientation error, and our new error measure.

Our simulation results are consistent with common three object triangulation algorithms. In particular, in the first configuration, we can easily spot the critic

circumference where errors are large, the error being minimum at the center of this circumference. In the second configuration, this critic circumference degenerates as the line passing through the beacons. In addition, one can see that, outside the critic circumference, the error increases with the distance to the beacons. It is also interesting to note that

1⁄|D| has a similar shape than the position or orientation errors (except in the particular case of collinear beacons). It can be proven [starting from equations

18↑ and

18↑], by a detailed sensitivity analysis of the robot position error with respect to angles, that

where

Δφ is the angle error (assumed to be the same for the three angles), and

f(.) is some function of all the other parameters (see the appendix for details). This confirms our claim that

1⁄|D| can be used as an approximation of the position error. Furthermore, one can observe from the graphic scales, that the position or orientation errors almost evolve linearly with angle errors, when they are small (look at the scale of the different graphics). Note that there is a small discrepancy in the symmetry of the simulated orientation error with respect to the expected behavior. This is explained because we used

B1 to compute the orientation (see equation

26↑). In addition, the histogram equalization emphasizes this small discrepancy.

4.2 Benchmarks

|

|

Algorithm

|

+

|

×

|

⁄

|

√(x)

|

trigo

|

time (s) †

|

|

[46, 47]

|

ToTal 1

|

30

|

17

|

2

|

0

|

2

|

0.163

|

|

[38]

|

Ligas 1

|

29

|

22

|

2

|

0

|

2

|

0.171

|

|

[29]

|

Font-Llagunes 1

|

23

|

17

|

2

|

0

|

5

|

0.223

|

|

[42]

|

Cassini 2

|

19

|

8

|

3

|

0

|

4

|

0.249

|

|

[5]

|

Cohen 1

|

37

|

15

|

3

|

2

|

4

|

0.272

|

|

[2]

|

Easton 2

|

22

|

24

|

1

|

0

|

5

|

0.298

|

|

[9]

|

McGillem 1

|

37

|

18

|

5

|

2

|

8

|

0.340

|

|

[17]

|

Hmam 2

|

29

|

11

|

3

|

3

|

9

|

0.428

|

|

[5]

|

Cohen 2

|

26

|

11

|

3

|

2

|

11

|

0.437

|

|

[34]

|

Esteves 2

|

43

|

14

|

2

|

2

|

11

|

0.471

|

|

[42]

|

Collins 2

|

34

|

10

|

2

|

2

|

11

|

0.485

|

|

[9]

|

McGillem 2

|

29

|

9

|

3

|

2

|

11

|

0.501

|

|

[42]

|

Kaestner 2

|

28

|

10

|

3

|

2

|

11

|

0.504

|

|

[45]

|

Tsukiyama 1

|

52

|

22

|

3

|

5

|

14

|

0.596

|

|

[16]

|

Casanova 1

|

52

|

21

|

4

|

5

|

14

|

0.609

|

|

[32]

|

Tienstra 2

|

33

|

18

|

8

|

3

|

9

|

0.640

|

|

[31]

|

Font-Llagunes 1

|

62

|

25

|

6

|

1

|

8

|

0.648

|

|

[8]

|

Madsen 2

|

38

|

24

|

5

|

3

|

15

|

0.707

|

† For

106 executions on an Intel(R) Core(TM) i7 920 @ 2.67GHz.

1 Geometric circle intersection 2 Trigonometric solution

Table 1 Comparison of various triangulation algorithms to our ToTal algorithm.

We have also compared the execution time of our algorithm to 17 other three object triangulation algorithms similar to ours (

i. e. which work in the whole plane and for any beacon ordering). These algorithms have been introduced in Section

2↑, and have been implemented after the author’s guidelines. Each algorithm has been running

106 times at random locations of the same square shaped area as that used for the error analysis. The last column of Table

1↑ provides the running times on an

Intel(R) Core(TM) i7 920 @ 2.67GHz (6GB RAM, Ubuntu 11.04, GCC 4.5.2). We used the C

clock_gettime function to measure the execution times, in order to yield reliable results under timesharing. It appears that our algorithm is the fastest of all (about

30 % faster than the last best known algorithm of Font-Llagunes

[29], and

5 % faster than the recent algorithm of Ligas

[38]). In addition to the computation times, we have also reported the number of basic arithmetic computations, squared roots, and trigonometric functions used for each algorithm. This may help to choose an algorithm for a particular hardware architecture, which may have a different behavior for basic arithmetic computations, or complex functions such as square root or trigonometric functions. One can see that our algorithm has the minimum number of trigonometric functions, which is clearly related to the times on a classical computer architecture (see Table

1↑). A fast algorithm is an advantage for error simulations, beacon placement, and beacon position optimization algorithms. Note that the algorithm of Ligas also uses the minimum number of trigonometric functions (two

cot(.) computations) like

ToTal, explaining why both algorithms are basically similar in terms of efficiency. However, the algorithm of Ligas does not provide a reliability measure, contrarily to our algorithm

ToTal.

5 Conclusions

Most of the many triangulation algorithms proposed so far have major limitations. For example, some of them need a particular beacon ordering, have blind spots, or only work within the triangle defined by the three beacons. More reliable methods exist, but they have an increasing complexity or they require to handle certain spatial arrangements separately.

This paper presents a new three object triangulation algorithm based on the elegant notion of power center of three circles. Our new triangulation algorithm, which is called ToTal, natively works in the whole plane (except when the beacons and the robot are concyclic or collinear), and for any beacon ordering. Furthermore, it only uses basic arithmetic computations and two cot(.) computations. Comprehensive benchmarks show that our algorithm is faster than comparable algorithms, and simpler in terms of the number of operations. In this paper, we have compared the number of basic arithmetic computations, squared root, and trigonometric functions used for 17 known triangulation algorithms.

In addition, we have proposed a unique reliability measure of the triangulation result in the whole plane, and established by simulations that 1⁄|D| is a natural and adequate criterion to estimate the error of the positioning. To our knowledge, none of the algorithms of the same family does provide such a measure. This error measure can be used to identify the pathological cases (critic circumference), or as a validation gate in Kalman filters based on triangulation.

For all these reasons, ToTal is a fast, flexible, and reliable three object triangulation algorithm. Such an algorithm is an excellent choice for many triangulation issues related to the performance or optimization, such as error simulations, beacon placement or beacon position optimization algorithms. It can also be used to understand the sensitivity of triangulation with respect to the the input angles. A fast and inexpensive algorithm is also an asset to initialize a positioning algorithm that internally relies on a Kalman filter.

Appendix

In this section, we detail the sensitivity analysis of the computed position. We start by computing the derivative of

xR with respect to the first angle

φ1

where

g1(.) is some function of all the other parameters. Similar results yield for the derivative of

xR with respect to the second and third angles,

φ2 and

φ3 respectively

where

g2(.) and

g3(.) are some functions of all the other parameters. The total differential of

xR with respect to

φ1,

φ2, and

φ3 is given by

where we assumed that the three infinitesimal increments are equal

Δφ = Δφ1 = Δφ2 = Δφ3. A similar result yields for the total differential of

yR

where

h(.) is some function of all the other parameters. Finally, we can compute

ΔdR as follows

where

f(.) is some function of all the other parameters.

References

[1] A. Bais, R. Sablatnig, J. Gu. Single landmark based self-localization of mobile robots. Third Canadian Conference on Computer and Robot Vision (CRV), 2006.

[2] A. Easton, S. Cameron. A Gaussian Error Model for Triangulation-Based Pose Estimation Using Noisy Landmarks. IEEE Conference on Robotics, Automation and Mechatronics:1-6, 2006. URL http://dx.doi.org/10.1109/RAMECH.2006.252663.

[3] A. Kelly. Precision Dilution in Triangulation Based Mobile Robot Position Estimation. Intelligent Autonomous Systems, 8:1046-1053, 2003.

[4] A. Siadat, S. Vialle. Robot Localization, Using P-Similar Landmarks, Optimized Triangulation and Parallel Programming. IEEE International Symposium on Signal Processing and Information Technology, 2002.

[5] C. Cohen, F. Koss. A Comprehensive Study of Three Object Triangulation. Mobile Robots VII, 1831:95-106, 1992. URL http://dx.doi.org/10.1117/12.143782.

[6] C. Lee, Y. Chang, G. Park, J. Ryu, S.-G. Jeong, S. Park, J.W. Park, H.C. Lee, K.-S. Hong, M.H. Lee. Indoor positioning system based on incident angles of infrared emitters. Conference of the IEEE Industrial Electronics Society (IECON), 3:2218-2222, 2004.

[7] C.B. Madsen, C.S. Andersen, J.S. Sorensen. A robustness analysis of triangulation-based robot self-positioning. International Symposium on Intelligent Robotic Systems:195-204, 1997.

[8] C.B. Madsen, C.S. Andersen. Optimal landmark selection for triangulation of robot position. Robotics and Autonomous Systems, 23(4):277-292, 1998. URL http://dx.doi.org/10.1016/S0921-8890(98)00014-1.

[9] C.D. McGillem, T.S. Rappaport. A Beacon Navigation Method for Autonomous Vehicles. IEEE Transactions on Vehicular Technology, 38(3):132-139, 1989. URL http://dx.doi.org/10.1109/25.45466.

[10] C.D. McGillem, T.S. Rappaport. Infra-red location system for navigation of autonomous vehicles. IEEE International Conference on Robotics and Automation (ICRA), 2:1236-1238, 1988. URL http://dx.doi.org/10.1109/ROBOT.1988.12230.

[11] D. Avis, H. Imai. Locating a robot with angle measurements. Journal of Symbolic Computation, 10(3-4):311-326, 1990.

[12] D. Kortenkamp. Perception for mobile robot navigation: A survey of the state of the art. 1994.

[13] D. Sinriech, S. Shoval. Landmark configuration for absolute positioning of autonomous vehicles. IIE Transactions, 32(7):613-624, 2000.

[14] E. Demaine, A. Lopez-Ortiz, J. Munro. Robot Localization without Depth Perception. Proceedings of Scandinavian Workshop on Algorithm Theory (SWAT), 2368:177-194, 2002.

[15] E. Zalama, S. Dominguez, J. Gomez, J.R. Peran. Microcontroller based system for 2D localisation. Mechatronics, 15(9):1109-1126, 2005. URL http://dx.doi.org/10.1016/j.mechatronics.2005.05.001.

[16] E.Z. Casanova, S.D. Quijada, J.G. García-Bermejo, J.R.P. González. A New Beacon-based System for the Localization of Moving Objects. IEEE International Conference on Mechatronics and Machine Vision in Practice, 2002. URL http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.7.1615.

[17] H. Hmam. Mobile Platform Self-Localization. Information, Decision and Control:242-247, 2007. URL http://dx.doi.org/10.1109/IDC.2007.374557.

[18] H. Hu, D. Gu. Landmark-based Navigation of Industrial Mobile Robots. International Journal of Industry Robot, 27(6):458-467, 2000. URL http://dx.doi.org/10.1108/01439910010378879.

[19] H. Sobreira, A.P. Moreira, J. Esteves. Characterization of Position and Orientation Measurement Uncertainties in a Low-Cost Mobile Platform. Portuguese Conference on Automatic Control (CONTROLO):635-640, 2010.

[20] H. Sobreira, A.P. Moreira, J. Esteves. Low cost self-localization system with two beacons. International Conference on Mobile Robots and Competitions (ROBOTICA):73-77, 2010.

[21] I. Loevsky, I. Shimshoni. Reliable and efficient landmark-based localization for mobile robots. Robotics and Autonomous Systems, 58(5):520-528, 2010. URL http://dx.doi.org/10.1016/j.robot.2010.01.006.

[22] I. Shimshoni. On Mobile Robot Localization From Landmark Bearings. IEEE Transactions on Robotics and Automation, 18(6):971-976, 2002. URL http://dx.doi.org/10.1109/TRA.2002.805663.

[23] J. Borenstein, H. Everett, L. Feng, D. Wehe. Mobile Robot Positioning - Sensors and Techniques. Journal of Robotic Systems, 14(4):231-249, 1997.

[24] J. Borenstein, H. Everett, L. Feng. Where am I? Systems and Methods for Mobile Robot Positioning. 1996.

[25] J. Borenstein, L. Feng. UMBmark: A Benchmark Test for Measuring Odometry Errors in Mobile Robots. Society of Photo-Instrumentation Engineers, 1001:113-124, 1995. URL http://dx.doi.org/10.1117/12.228968.

[26] J. Esteves, A. Carvalho, C. Couto. An improved version of the Generalized geometric triangulation algorithm. European-Latin-American Workshop on Engineering Systems, 2006.

[27] J. Esteves, A. Carvalho, C. Couto. Generalized Geometric Triangulation Algorithm for Mobile Robot Absolute Self-Localization. IEEE International Symposium on Industrial Electronics (ISIE), 1:346-351, 2003.

[28] J.-D. Eiden. Geometrie analytique classique. Calvage & Mounet, 2009.

[29] J.M. Font-Llagunes, J.A. Batlle. Consistent triangulation for mobile robot localization using discontinuous angular measurements. Robotics and Autonomous Systems, 57(9):931-942, 2009. URL http://dx.doi.org/10.1016/j.robot.2009.06.001.

[30] J.M. Font-Llagunes, J.A. Batlle. Mobile Robot Localization. Revisiting the Triangulation Methods. International IFAC Symposium on Robot Control, 8:340-345, 2006.

[31] J.M. Font-Llagunes, J.A. Batlle. New method that solves the three-point resection problem using straight lines intersection. Journal of Surveying Engineering, 135(2):39-45, 2009. URL http://dx.doi.org/10.1061/(ASCE)0733-9453(2009)135:2(39).

[32] J.M. Porta, F. Thomas. Simple Solution to the Three Point Resection Problem. Journal of Surveying Engineering, 135(4):170-172, 2009. URL http://dx.doi.org/10.1061/(ASCE)0733-9453(2009)135:4(170).

[33] J.M. Sanchiz, J. Badenas, F. Pla. Control system and laser-based sensor design of an automonous vehicle for industrial environments. Society of Photo-Instrumentation Engineers, 5422(1):608-615, 2004. URL http://dx.doi.org/10.1117/12.565046.

[34] J.S. Esteves, A. Carvalho, C. Couto. Position and Orientation Errors in Mobile Robot Absolute Self-Localization Using an Improved Version of the Generalized Geometric Triangulation Algorithm. IEEE International Conference on Industrial Technology (ICIT):830-835, 2006. URL http://dx.doi.org/10.1109/ICIT.2006.372345.

[35] K. Briechle, U. Hanebeck. Localization of a Mobile Robot Using Relative Bearing Measurements. IEEE Transactions on Robotics and Automation, 20(1):36-44, 2004.

[36] M. Betke, L. Gurvits. Mobile Robot Localization Using Landmarks. IEEE Transactions on Robotics and Automation, 13(2):251-263, 1997. URL http://dx.doi.org/10.1109/70.563647.

[37] M. Brkic, M. Lukic, J. Bajic, B. Dakic, M. Vukadinovic. Hardware realization of autonomous robot localization system. International Convention on Information and Communication Technology, Electronics and Microelectronics (MIPRO):146-150, 2012.

[38] M. Ligas. Simple Solution to the Three Point Resection Problem. Journal of Surveying Engineering, 139(3):120-125, 2013. URL http://dx.doi.org/10.1061/(ASCE)SU.1943-5428.0000104.

[39] M. Lukic, B. Miodrag, J. Bajic. An Autonomous Robot Localization System Based on Coded Infrared Beacons. Research and Education in Robotics - EUROBOT 2011, 161:202-209, 2011. URL http://dx.doi.org/10.1007/978-3-642-21975-7_18.

[40] O. Tekdas, V. Isler. Sensor Placement Algorithms for Triangulation Based Localization. IEEE International Conference on Robotics and Automation (ICRA):4448-4453, 2007. URL http://dx.doi.org/10.1109/ROBOT.2007.364164.

[41] O. Tekdas, V. Isler. Sensor Placement for Triangulation-Based Localization. IEEE Transactions on Automation Science and Engineering, 7(3):681-685, 2010. URL http://dx.doi.org/10.1109/TASE.2009.2037135.

[42] R. Burtch. Three point resection problem. Surveying Engineering Department, Ferris State University, 2005.

[43] S. Shoval, D. Sinriech. Analysis of landmark configuration for absolute positioning of autonomous vehicles. Journal of Manufacturing Systems, 20(1):44-54, 2001.

[44] T. Heath. Euclid: the thirteen books of The Elements. Dover Publications, 1956.

[45] T. Tsukiyama. Mobile Robot Localization from Landmark Bearings. World Congress on Fundamental and Applied Metrology:2109-2112, 2009. URL http://www.imeko.org/publications/wc-2009/IMEKO-WC-2009-TC17-084.pdf.

[46] V. Pierlot, M. Van Droogenbroeck, M. Urbin-Choffray. A new three object triangulation algorithm based on the power center of three circles. Research and Education in Robotics (EUROBOT), 161:248-262, 2011. URL http://hdl.handle.net/2268/89435.

[47] V. Pierlot, M. Van Droogenbroeck. A New Three Object Triangulation Algorithm for Mobile Robot Positioning. IEEE Transactions on Robotics, 30(3):566-577, 2014. URL http://dx.doi.org/10.1109/TRO.2013.2294061.

[48] V. Pierlot, M. Van Droogenbroeck. A simple and low cost angle measurement system for mobile robot positioning. Workshop on Circuits, Systems and Signal Processing (ProRISC):251-254, 2009. URL http://orbi.ulg.ac.be/handle/2268/30550.